BakeBuddy

This project developed a recipe app concept that recognizes in-air gestures through the iPhone's camera and translates specific gestures into commands for navigating recipes, controlling timers, and performing other functions. The app offers gesture commands that are easy to master, supported by tutorials and real-time feedback that reduce operational errors, with the goal of making cooking more enjoyable, convenient, and efficient.

Skills

Design Research

Prototyping

Sketching

Style Guide Design

Usability Testing

User Flow Diagramming

User Interface (UI) Design

Wireframing

Tools

Figma

Adobe After Effects

Project Timeline

January 2024 - March 2024 (6 weeks)

Program and Role

California College of the Arts

Interaction Design | NUI and Objects | Professor Graham Plumb

Merma Ma (Interaction Design and UI Design)

Introduction

Gestures are bodily movements that occur naturally, and often unconsciously, when we interact with others, adding depth to conversation. Applying this physical language to digital devices lets iPhone users control applications through gestures without touching the device, resulting in cleaner and more interactive exchanges.

This project's challenge is to design a gestural interface and language that create a seamless, instinctive mode of user engagement.

The Problem

I am passionate about cooking, which led me to explore mobile recipe applications as a problem domain.

A pervasive issue I identified is that traditional recipe apps require physical contact with the device. This not only disrupts the flow of cooking but also costs time and adds inconvenience, because users must repeatedly clean their hands before touching the screen, which degrades the overall cooking experience.

How might we design a gesture-controlled recipe app that uses the iPhone's camera to recognize gestures, making cooking more intuitive and enjoyable while remaining easy to learn and helping users avoid cooking errors?

Introducing BakeBuddy

Design Process

During observation, I noticed that my friends used a variety of gestures. Some were conscious, such as brushing hair away when it blocked their view or gesturing for emphasis while speaking. Others were unconscious, such as fidgeting with their fingers or touching their face.

To design new interactive gestures, it is essential to understand how people use these "symbols" in communication, so I studied both intentional and unintentional gestures. I also recorded a conversation of about 30 minutes between my friends and me, which let me observe our deliberate and spontaneous gestures. I found that some gestures had distinctive characteristics that seemed to be shared between speakers, a kind of resonance between individuals. For instance, certain gestures appeared to be tied to what was being said or to the speaker's emotional state. Gestures like these provide additional cues that help us understand the intentions and emotions of others.

Researching Gestures

Representing Gestures

Before developing a gesture control system, I first had to identify and define the controls the app needed, so I rapidly brainstormed potential functions and drafted preliminary gesture designs for them. The main challenge was making each gesture intuitive, understandable, and easy to remember. I therefore designed a set of simple gestures that users could grasp and learn quickly without additional explanation. Making these gestures understandable to users from diverse cultural and linguistic backgrounds was especially important, so that every user could take full advantage of the app.

Through user testing with sketches and animations, I gathered feedback on how intuitively users understood each gesture and how easily they could perform it. This iterative process aimed to produce a gesture language that fits naturally into cooking without becoming a distraction.

Echo and Semantic Feedback

In gesture interfaces, clear feedback is essential to a positive user experience. It improves usability, reduces errors, and builds user confidence by confirming actions and encouraging further exploration. Echo feedback tells users that their input has been received. In a gesture-based recipe app, for instance, an immediate animation shows that a gesture was recognized, and changes in color and positive indicators confirm correct input. Semantic feedback then communicates the outcome of the action, helping users understand how the app responded to their gesture. Together, these two layers keep users confident and informed while they interact with the system without physical contact.

User Flow Design and Wireframing

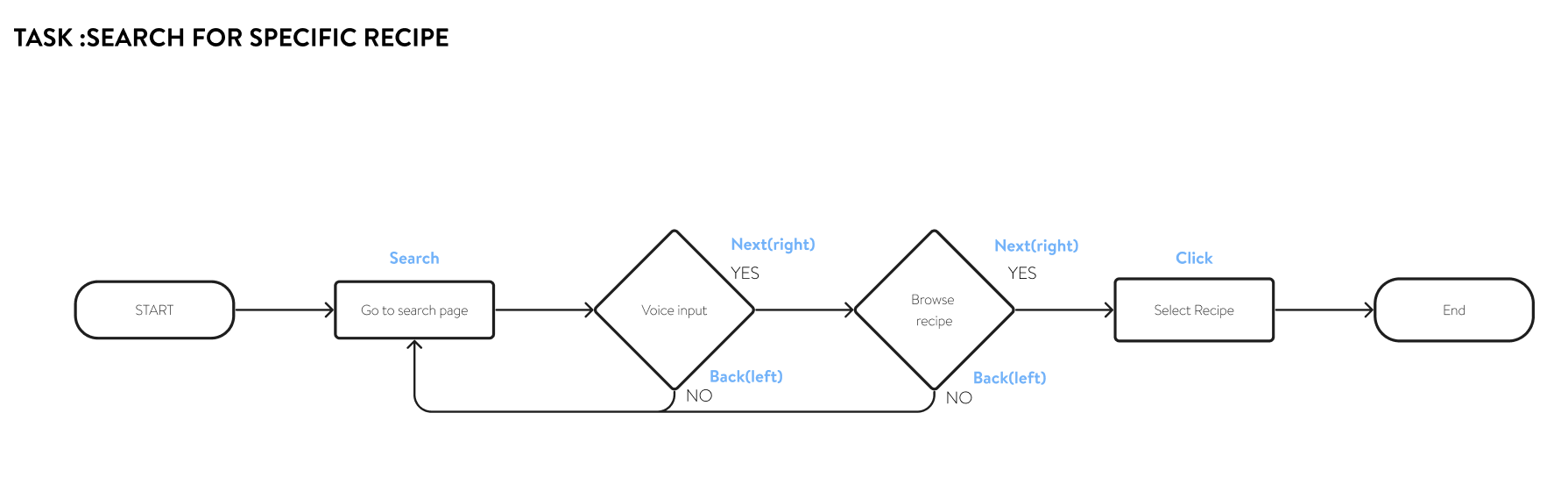

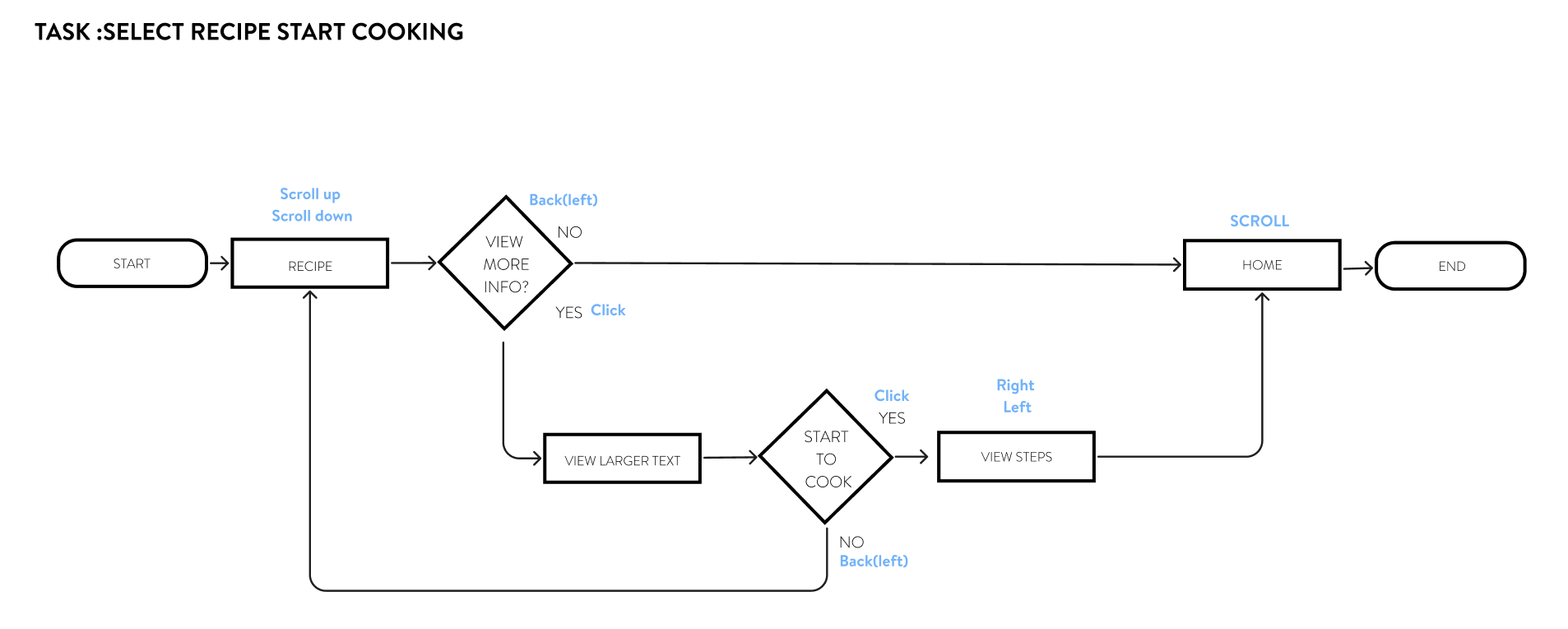

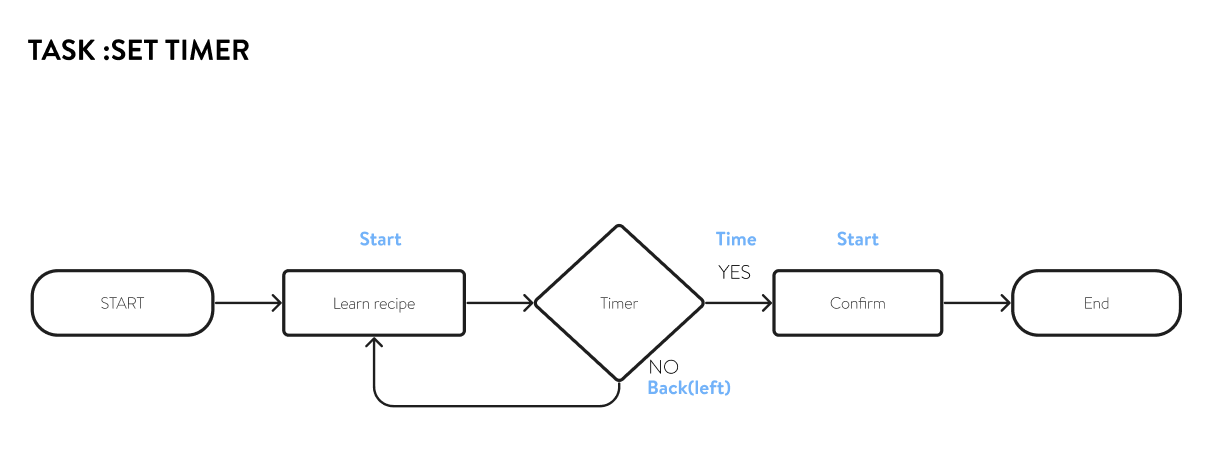

A user flow diagram outlines the steps a user takes within an app or website to accomplish a task. It is crucial for planning interactive experiences because it clarifies the user's path and reveals the decision points they encounter along the way. With this view, I can streamline steps that may confuse users and create a more direct, efficient journey. User flow diagrams also surface potential problems before development starts, reducing rework and supporting a smooth, pleasant experience.

After designing each task flow, I began working on the core visual design for BakeBuddy. The first few sets of wireframes I designed for BakeBuddy were primarily focused on the onboarding and initial setup processes.

Wireframes let me define the app's functionality and confirm that each layout includes all necessary components. Because they are quick to create and modify, they make design iteration simple and inexpensive. As a fundamental early-stage step, wireframes provide an architectural blueprint for the app. By stripping away visual elements such as color and imagery, they keep my attention on layout structure, the relationships between screens, functionality, and user flow. This helps ensure that users can navigate logically and efficiently, and it lets me communicate my design intentions clearly to others. Most importantly, wireframes allow me to test the navigability and usability of the interface early in the project.

Style Guide and Branding

The final step in refining the BakeBuddy concept involves developing its style guide, which consolidates the product's aesthetic into a unified visual identity.

Because the app's theme is enthusiasm and vitality, I chose orange for the call-to-action buttons and set it against a black background to establish a bold visual identity. Orange, which symbolizes energy and passion, serves as the primary brand color and is meant to evoke the joy and excitement of cooking. The black background provides strong contrast that makes the orange stand out even more. I also used white space to give the interface a more comfortable, refreshing feel, and orange accents on white pop-up backgrounds draw the eye to key content.

Usability Testing and Revisions

I wrote a test script to minimize potential bias and leading questions, which made the results more reliable and objective. The script helped the sessions run smoothly and ensured that every necessary aspect was covered without wasting time, keeping the process efficient and consistent across participants. Testing this way helped confirm that the product meets users' real needs and expectations, laying a solid foundation for a user-centered design.

Closing Thoughts

Creating a gesture-based interaction project taught me a new tool, Adobe After Effects, and gave me a deeper understanding of user behavior and preferences. By observing and analyzing how people interact with technology through gestures, I built technical skills and gained insight into user needs. The process challenged my creative thinking by pushing me to consider how users physically interact with an interface, which greatly strengthened my empathy.

Exploring gesture interaction design showed me that new interaction methods can both enhance the user experience and solve practical problems in real usage scenarios. It broadened my understanding of design by showing the potential of integrating users' natural movements and behaviors into digital products. More than new design tools, this project taught me how to understand users deeply and solve design challenges creatively, making technology more human-centric, intuitive, and inclusive.